The theory of Anthropogenic Global Warming, so far as we understand it, consists of the following two assertions.

(1) If we increase the concentration of CO2 in the atmosphere to 600 ppmv (parts per million by volume), we will cause the world to warm up by at least 2°C. (The concentration was 300 ppmv in pre-industrial times and is currently 400 ppmv.)

(2) If we continue burning fossil fuels at our current rate, emitting 10 petagrams of carbon into the atmosphere every year, we will raise the concentration of CO2 in the atmosphere to 600 ppmv within the next one hundred years.

We can falsify the second assertion using our observations of carbon-14. We present a detailed analysis of atmospheric carbon-14 in a series of posts starting with Carbon-14: Origins and Reservoir. Here we present a summary, with approximate numerical values that are easy to remember.

Each year, cosmic rays create 8 kg of carbon-14 in the upper atmosphere. If carbon-14 were a stable atom, all carbon in the Earth's atmosphere would be carbon-14. But carbon-14 is not stable. One in eight thousand carbon-14 atoms decays each year. The rate at which the Earth's inventory of carbon-14 decays must be equal to the rate at which it is created. There must be 64,000 kg of carbon-14 on Earth.

The Earth's atmosphere contains 800 Pg of carbon (1 Pg = 1 Petagram = 10^12 kg) bound up in gaseous CO2. One part per trillion of this carbon is carbon-14 (1 ppt = 1 part in 10^12). There are 800 kg of carbon-14 in the atmosphere. That leaves 63,200 kg of the total inventory somewhere else. We'll call this "somewhere else" the carbon-14 reservoir.
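The inventory arithmetic above can be checked in a few lines. The following is a minimal sketch in Python that uses only the rounded figures quoted in this summary. The variable names are ours, chosen for illustration, and do not come from the detailed posts.

```python
# Rounded values quoted in the summary above.
PRODUCTION_KG_PER_YR = 8.0      # carbon-14 created by cosmic rays each year
MEAN_LIFETIME_YR = 8000.0       # one atom in eight thousand decays each year
ATMOSPHERE_CARBON_PG = 800.0    # carbon bound up in atmospheric CO2
C14_KG_PER_PG_AT_1_PPT = 1.0    # 1 Pg = 10^12 kg, so 1 ppt of 1 Pg is 1 kg

# In steady state decay equals creation: inventory / lifetime = production.
total_c14_kg = PRODUCTION_KG_PER_YR * MEAN_LIFETIME_YR

# The atmosphere holds carbon-14 at a concentration of 1 ppt.
atmosphere_c14_kg = ATMOSPHERE_CARBON_PG * C14_KG_PER_PG_AT_1_PPT

# Whatever is not in the atmosphere must be in the reservoir.
reservoir_c14_kg = total_c14_kg - atmosphere_c14_kg

# Carbon-14 that decays in the atmosphere itself each year.
atmosphere_decay_kg_per_yr = atmosphere_c14_kg / MEAN_LIFETIME_YR

print(total_c14_kg, atmosphere_c14_kg, reservoir_c14_kg, atmosphere_decay_kg_per_yr)
```

The last quantity shows how small the decay within the atmosphere is compared with the 8 kg created there each year.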

Each year, 8 kg of carbon-14 is created in the atmosphere by cosmic rays, and each year the atmosphere loses 8 kg of carbon-14 to the reservoir. (Here we are ignoring the 0.1 kg of atmospheric carbon-14 that decays each year.) There is no chemical reaction that can separate carbon-14 from normal carbon. Every 1 kg of carbon-14 that leaves the atmosphere for the reservoir will be accompanied by 1 Pg of normal carbon.

Consider the atmosphere before we began to add 10 Pg of carbon to it each year. The mass of carbon in the atmosphere is constant. If 1 Pg of carbon leaves the atmosphere and enters the reservoir, 1 Pg of carbon must go in the opposite direction, leaving the reservoir and entering the atmosphere.

The only way for there to be a net loss of carbon-14 from the atmosphere to the reservoir is if the concentration of carbon-14 in the reservoir is lower than in the atmosphere. The only place on Earth that is capable of acting as the reservoir is the deep ocean, in which the concentration of carbon-14 is 80% of the concentration in the atmosphere. Each year 40 Pg of carbon leaves the atmosphere and enters the deep ocean, carrying with it 40 kg of carbon-14, while 40 Pg of carbon leaves the ocean and enters the atmosphere, carrying with it 32 kg of carbon-14. The result is a net flow of 8 kg/yr of carbon-14 into the ocean. Furthermore, the ocean contains 63,200 kg of carbon-14 in concentration 0.8 ppt, so the total mass of carbon in the oceans is roughly 80,000 Pg.
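The exchange rate follows from the concentration difference alone. As a rough sketch, assuming the rounded concentrations above (1.0 ppt in the atmosphere, 0.8 ppt in the deep ocean), the calculation looks like this in Python. The function name is hypothetical.

```python
C14_PRODUCTION_KG_PER_YR = 8.0   # net carbon-14 that must flow into the ocean
ATMOSPHERE_C14_PPT = 1.0
OCEAN_C14_PPT = 0.8
RESERVOIR_C14_KG = 63200.0

def exchange_flux_pg_per_yr(net_c14_kg_per_yr, atm_ppt, ocean_ppt):
    """Carbon exchanged in each direction (Pg/yr) for a given net carbon-14 flow.

    At x ppt, one Pg of carbon carries x kg of carbon-14, so equal and
    opposite carbon flows F give a net carbon-14 flow of F * (atm - ocean).
    """
    return net_c14_kg_per_yr / (atm_ppt - ocean_ppt)

flux_pg_per_yr = exchange_flux_pg_per_yr(
    C14_PRODUCTION_KG_PER_YR, ATMOSPHERE_C14_PPT, OCEAN_C14_PPT
)
c14_into_ocean_kg = flux_pg_per_yr * ATMOSPHERE_C14_PPT
c14_out_of_ocean_kg = flux_pg_per_yr * OCEAN_C14_PPT
ocean_carbon_pg = RESERVOIR_C14_KG / OCEAN_C14_PPT

print(f"exchange flux: {flux_pg_per_yr:.0f} Pg/yr")
print(f"carbon-14 in: {c14_into_ocean_kg:.0f} kg/yr, out: {c14_out_of_ocean_kg:.0f} kg/yr")
print(f"carbon in ocean: {ocean_carbon_pg:.0f} Pg")
```

The ocean carbon comes out at 79,000 Pg, which the text rounds to 80,000 Pg.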

With the ocean and the atmosphere in equilibrium, 40 Pg of carbon is absorbed by the ocean each year, and 40 Pg is released by the ocean. If we were to double the quantity of carbon in the atmosphere, we would double the amount absorbed by the ocean each year. Instead of 40 Pg being absorbed each year, 80 Pg would be absorbed. We could double the concentration of carbon in the atmosphere by emitting 40 Pg/yr. But we emit only 10 Pg/yr. Our emissions are sufficient to increase the mass of carbon in the atmosphere by 25%, after which everything we emit will be absorbed by the oceans. The oceans contain 80,000 Pg of carbon. If we add 10 Pg/yr, it will take roughly eight thousand years to double the carbon concentration in the oceans, after which the concentration in the atmosphere will double also.
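The 25% figure can be reproduced with a very small simulation. The sketch below is ours, not the calculation from the detailed posts. It assumes that absorption by the ocean is proportional to the mass of carbon in the atmosphere, and that the ocean is so large that the 40 Pg/yr it releases does not change over the simulated period.

```python
def simulate_atmosphere(years, emissions_pg_per_yr=10.0,
                        initial_mass_pg=800.0, exchange_pg_per_yr=40.0,
                        dt_yr=0.1):
    """Return atmospheric carbon (Pg) after `years` of constant emissions.

    Assumptions (ours): absorption scales with atmospheric mass, and the
    release from the ocean stays fixed at its equilibrium value.
    """
    mass_pg = initial_mass_pg
    for _ in range(int(round(years / dt_yr))):
        absorbed = exchange_pg_per_yr * mass_pg / initial_mass_pg
        released = exchange_pg_per_yr
        mass_pg += (emissions_pg_per_yr + released - absorbed) * dt_yr
    return mass_pg

for years in (0, 20, 50, 100, 500):
    mass = simulate_atmosphere(years)
    print(f"after {years:3d} years: {mass:7.1f} Pg ({100 * (mass / 800.0 - 1):4.1f}% above start)")
```

Under these assumptions the atmosphere settles where absorption again balances emission plus release, which is 1000 Pg, or 25% above the starting mass.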

Back in the 1960s, atmospheric nuclear bomb tests doubled the concentration of carbon-14 in the atmosphere. Such tests stopped in 1967. In our more precise calculation we predict that the concentration of carbon-14 must relax after 1967 with a time constant of 17 years, so that it would be 1.37 ppt in 1984 and 1.05 ppt in 2018. The concentration did relax afterwards, with a time constant of roughly 15 years, and in 2016, the carbon-14 concentration in the atmosphere is indistinguishable from its value before the bomb tests. During that time, almost every CO2 molecule that existed in the atmosphere in 1967 passed into the ocean and was replaced by another from the ocean. Anyone claiming that our carbon emissions will remain in the atmosphere for thousands of years, such as the author of this article, is wrong. If we stopped burning fossil fuels tomorrow, the CO2 concentration of the atmosphere would return to its pre-industrial value within fifty years.
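The predicted values for 1984 and 2018 follow from a simple exponential relaxation. Here is a minimal sketch in Python, assuming the concentration starts at 2 ppt in 1967 and relaxes toward 1 ppt with the 17-year time constant quoted above. The function name and its parameters are ours.

```python
import math

def c14_concentration_ppt(year, start_year=1967, start_ppt=2.0,
                          equilibrium_ppt=1.0, time_constant_yr=17.0):
    """Atmospheric carbon-14 (ppt) relaxing exponentially toward equilibrium."""
    excess_ppt = start_ppt - equilibrium_ppt
    elapsed_yr = year - start_year
    return equilibrium_ppt + excess_ppt * math.exp(-elapsed_yr / time_constant_yr)

for year in (1967, 1984, 2018):
    print(year, round(c14_concentration_ppt(year), 2))
```

Passing time_constant_yr=15.0 gives the curve for the relaxation we observe.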

Carbon enters and leaves the ocean as molecules of CO2. Statistical mechanics dictates that the rate of absorption is weakly dependent upon temperature, but the rate of emission is strongly dependent upon temperature. When we calculate the effect of temperature upon the equilibrium between the ocean and the atmosphere, we conclude that a 1°C warming of the oceans will cause a 10 ppmv increase in the concentration of CO2 in the atmosphere. When we look back at the record of CO2 concentration and temperature over the past 400,000 years, we see the correlation we expect, with the magnitude of the changes in good agreement with our prediction. For a 12°C increase in temperature, for example, the concentration of CO2 increases by 110 ppmv.

In pre-industrial times, the CO2 concentration of the Earth's atmosphere was roughly 300 ppmv. A more exact value for the creation of carbon-14 is 7.5 kg/yr, and we conclude that 37 Pg/yr of carbon was being absorbed and emitted by the ocean. When we add 10 Pg/yr human emissions from burning fossil fuels, we expect the concentration of CO2 in the atmosphere to rise by 27% to 380 ppmv, which is close to the 400 ppmv we observe.
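The same arithmetic with the more exact creation rate can be written out as follows. This is a sketch, assuming the 0.2 ppt difference between atmosphere and deep ocean used in the summary above. The variable names are ours.

```python
C14_PRODUCTION_KG_PER_YR = 7.5   # more exact creation rate quoted above
C14_DIFFERENCE_PPT = 0.2         # assumption: 1.0 ppt in atmosphere, 0.8 ppt in ocean
PREINDUSTRIAL_PPMV = 300.0
EMISSIONS_PG_PER_YR = 10.0

# Carbon exchanged each way per year to carry the net carbon-14 flow.
exchange_pg_per_yr = C14_PRODUCTION_KG_PER_YR / C14_DIFFERENCE_PPT

# Absorption scales with concentration, so the atmosphere rises until the
# extra absorption equals our emissions.
fractional_rise = EMISSIONS_PG_PER_YR / exchange_pg_per_yr
predicted_ppmv = PREINDUSTRIAL_PPMV * (1.0 + fractional_rise)

print(f"exchange: {exchange_pg_per_yr:.1f} Pg/yr")
print(f"rise: {100 * fractional_rise:.0f}%")
print(f"predicted concentration: {predicted_ppmv:.0f} ppmv")
```

The unrounded exchange is 37.5 Pg/yr. Rounding it to 37 Pg/yr, as in the text, moves the predicted concentration by about 1 ppmv.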

Our analysis of the carbon cycle makes three independent and unambiguous predictions all of which turn out to be correct to within ±10%. Our analysis is reliable, and it tells us that it will take roughly eight thousand years to double the CO2 concentration of the atmosphere if we continue burning fossil fuels at our current rate. Assertion (2) above is wrong by two orders of magnitude. The theory of Anthropogenic Global Warming, as stated above, is untrue.

POST SCRIPT: Assertion (1) is harder to falsify, and we do not claim to have done so in a manner convincing to all readers. Nevertheless, we did conclude that assertion (1) had to be wrong in our series of posts on the greenhouse effect, which we summarize in Anthropogenic Global Warming. We calculated that doubling the CO2 concentration of the atmosphere will cause the Earth to warm up by 1.5°C, provided we ignore changes in water vapor and cloud cover. As the world warms up, however, water evaporates more quickly from the oceans, and we get more clouds. Clouds reflect sunlight. The warming effect of doubling CO2 concentration is reduced by an increase in cloud cover. Clouds stabilize the Earth's temperature because they become more frequent as the Earth warms up, and less frequent as it cools down. Our simulation of the atmosphere with clouds suggests that the actual warming caused by a doubling of CO2 will be 0.9°C. So far as we can tell, the climate models used by the majority of climate scientists do not account for the increase in cloud cover that occurs as the world warms up. But they do account for the increase in water vapor in the atmosphere. Clouds cool the world, but water vapor is another greenhouse gas, and warms the world. By including water vapor but excluding the increasing cloud cover, these climate models conclude that the effect of doubling CO2 concentration will be 2°C or larger.

POST POST SCRIPT: Some readers suggest that the atmosphere-ocean system cannot be modeled with linear diffusion because the dissolved CO2 does not increase in proportion to atmospheric CO2 concentration. We address and reject their claim in an update to Probability of Exchange. They claim that Henry's Law does not apply to CO2 and seawater, despite many measurements to the contrary, such as Tsui et al., and general acceptance of Henry's Law in academic texts.

Sunday, October 9, 2016

Wednesday, April 4, 2012

Conclusion

The anthropogenic global warming (AGW) hypothesis presented by the majority of today's climatologists has two parts. First it claims that the world is getting exceptionally warm, and second it claims that human carbon dioxide (CO2) emissions are the cause of this warming. Seven years ago, we began our personal investigation of this hypothesis, and we did so by considering whether or not the world was indeed getting exceptionally warm.

The first thing we did was estimate the uncertainty inherent in the measurements of global surface temperature. We concluded that natural variations in local climate introduce an error of roughly 0.14°C in the measurement of the change in temperature between any two points in time. The fact that the error is constant with the time over which we measure the change is a consequence of the particular characteristics of local climate fluctuations.

We downloaded the weather station data from NCDC and calculated the global surface anomaly using a method we called integrated derivatives, but which others have called first differences. The graph we obtained was almost identical to the one obtained by CRU using their complex reference grid method. It remains a mystery to us why institutions like CRU, NASA, and NCDC use such a complex method when a far simpler one will do. All graphs show roughly a 0.6°C rise in global surface temperature from 1950 to 2000. This rise is significant compared to our expected resolution of 0.14°C.
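For readers unfamiliar with first differences, the idea can be sketched in a few lines of Python. This is an illustration only, not the code we ran on the NCDC data: the function name, the data layout (a dictionary of annual mean temperatures per station), and the example stations are all hypothetical.

```python
def first_difference_anomaly(stations):
    """Global anomaly by first differences (integrated derivatives).

    stations: dict mapping station id -> dict mapping year -> annual mean (°C).
    For each pair of adjacent years we average the year-to-year change over
    every station that reported in both years, then sum those averages.
    """
    years = sorted({year for record in stations.values() for year in record})
    anomaly = {years[0]: 0.0}
    running = 0.0
    for previous, current in zip(years, years[1:]):
        changes = [record[current] - record[previous]
                   for record in stations.values()
                   if previous in record and current in record]
        if changes:  # carry the anomaly forward if no station spans the pair
            running += sum(changes) / len(changes)
        anomaly[current] = running
    return anomaly

# Two made-up stations with different absolute temperatures and start dates.
example = {
    "A": {2000: 10.0, 2001: 10.5, 2002: 11.0},
    "B": {2001: 20.0, 2002: 20.5},
}
print(first_difference_anomaly(example))
```

Because only changes are averaged, a station's absolute temperature never enters the result, so no reference period or grid is needed to combine stations with different climates.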

We made this plot superimposing the number of weather stations and the global surface anomaly versus time. The number of weather stations drops dramatically from 1960 to 1990. Only one in four remains active at the end of this thirty-year period. During the same period, the global surface anomaly shows a 0.6°C rise. By selecting subsets of the weather stations, we found that the apparent warming from 1950 to 1990 varied from 0.3°C to 1.0°C depending upon whether we used stations that disappeared in that period, persisted through that period, or existed for shorter or longer intervals in the same century. Thus it seemed to us that some significant amount of work would have to be done to eliminate the change in the number of weather stations as a source of error in the data. But we saw no mention whatsoever of this source of error in published papers in which the global surface anomaly is presented, such as Jones et al.

We plotted a global map of the available weather stations, color-coded to show the date they first started reporting. The map shows that almost all stations in the tropics began operating after 1930, while most of those in the temperate regions were operating by 1880. This seems to us to be another source of systematic error in our measurement of the global surface anomaly.

Weather stations might also be affected by the appearance of buildings, tarmac, and road traffic. We found examples of weather stations in which such urban heating caused an apparent warming of several degrees centigrade over a few decades. It seemed to us that this effect would have to be examined in depth by any paper presenting a global surface trend. But papers such as Jones et al. do not address the urban heating issue directly. Instead, they claim that the effect is negligible and refer to other papers as proof. But when we looked up those other papers, we did not find any such proof.

In order to argue that modern temperatures were exceptionally warm, climatologists produced the hockey stick graph, in which a collection of potential long-term measurements of global surface temperature were combined together under the assumption that they could be trusted only to the extent that they showed a temperature increase from 1950 to 2000. Indeed, if a measurement showed a temperature decline in that period, the hockey stick method would flip the trend over and add it to the combination so that it now contributed to a rise in the same period.

The hockey-stick graph shows no sign of the Medieval Warm Period, in which Greenland was inhabited by farmers, nor the Little Ice Age, when the Thames was known to freeze over, and nor should we expect it to. Given a random set of measurements, the hockey-stick combination method will almost always produce a graph that shows a sharp rise from 1950 to 2000 and a gentle descent during the thousand years beforehand. When applied to the existing measurements of temperature by tree rings, ice cores, and other such indirect methods, it is no surprise that the method produced that same shape.
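The effect of weighting by recent trend can be tried on random data. The following Python sketch is a toy version of the combination rule as we describe it in this post, not the published reconstruction code. The function names, the random-walk stand-ins for proxy records, and the choice of 50 series of 1000 points are all our own assumptions.

```python
import random

def trend(values):
    """Least-squares slope of values against their index."""
    n = len(values)
    x_mean = (n - 1) / 2.0
    y_mean = sum(values) / n
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator

def combine_by_recent_trend(series_list, window):
    """Weight each series by its trend over the final `window` points.

    A series that falls within the window receives a negative weight,
    which flips it over before it is added to the combination.
    """
    weights = [trend(series[-window:]) for series in series_list]
    scale = sum(abs(w) for w in weights)
    length = len(series_list[0])
    return [sum(w * series[t] for w, series in zip(weights, series_list)) / scale
            for t in range(length)]

def random_walk(length, rng):
    """Trendless random walk standing in for a proxy record (made-up data)."""
    level, walk = 0.0, []
    for _ in range(length):
        level += rng.gauss(0.0, 1.0)
        walk.append(level)
    return walk

rng = random.Random(0)
proxies = [random_walk(1000, rng) for _ in range(50)]
combined = combine_by_recent_trend(proxies, window=50)
print("trend in final window:", trend(combined[-50:]))
print("trend before the window:", trend(combined[:-50]))
```

In this toy version the rise in the final window is guaranteed by construction, because the trend of the combination there is a sum of squared trends. The shape of the earlier part depends on the random data.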

We presented our doubts about the surface temperature measurements and the hockey stick graph to believers in the AGW hypothesis. We were received with disdain and given no satisfactory answers. Furthermore, the Climategate affair revealed several significant breaches of scientific method by the climate science community. For example, in this graph produced by climatologists for the World Meteorological Organization, the authors removed the tree ring temperature data from 1960 onwards because it showed a decline in temperature, and substituted temperature station measurements in their place. They plotted the combination as a single line. When I asked a prominent climatologist what exactly had been done, he said, "The smooth was calculated using instrumental data past 1960." He declared that a better way to handle the divergence of the tree-ring data from the station measurements would be to cut short the graph of tree-ring data at 1960, so as to hide the decline in temperatures measured by the tree rings.

What we see here is the assumption by climatologists that the world has been warming up and that the global temperature measured by weather stations is correct. This assumption leads them to delete conflicting data on the grounds that it must be bad data. Thus it becomes impossible for them to discover that their assumption is incorrect. By this time, we were skeptical of the global surface anomaly we obtained from the station data. We were no longer certain that the data itself had not been modified by NCDC. We had little reason to trust any other measurement produced by climatologists, we were unimpressed with the hockey-stick method of combining measurements, and we were quite certain that recent temperatures were not exceptional for the past ten thousand years.

We turned our attention to the second part of the AGW hypothesis: the one that says doubling the atmosphere's CO2 concentration will increase the surface temperature by roughly 3°C. It took us a long time to come to a conclusion on this one. The climate models upon which such predictions are based are the private property of various climatologists. In any event, we do not trust models produced by a community that is willing to delete data that conflict with its assumptions. If they are willing to delete data, we must assume that they are willing to adjust their models until the models give predictions consistent with their AGW hypothesis.

We began with some laboratory experiments on radiation. We stated the principle of the greenhouse effect. After a great deal of searching around, we eventually obtained the absorption spectrum of various layers of the Earth's atmosphere. This allowed us to confirm that, if the skies remained clear, a doubling of CO2 concentration would cause the world to warm up by about 1.5°C.

But of course the skies don't remain clear. The formation of clouds is a strong function of surface temperature. If the world warms up, there will be more clouds. They will reflect more of the Sun's light while, at the same time, slowing down the radiation of heat into space by the Earth. To determine how these two effects would interact, we built our own climate model, which we called Circulating Cells.

When it comes to determining the effect of increased cloud cover, the most critical parameter to decide upon is the reflection of sunlight by clouds per millimeter of water depth in the cloud. It seemed to us that there should be a large body of literature written recently upon this subject because it is so important to climate modeling. The best paper we found upon the subject was written in 1948, Reflection, Absorption, and Transmission of Insolation by Stratus Cloud. We found a couple of more recent papers about reflection, such as this one, but they do not attempt to provide an empirical formula for the reflection of clouds with increasing cloud depth. We concluded that climatologists are not examining this issue in detail.

In a long sequence of small steps, we built up our climate model until it implemented surface convection, surface heat capacity, evaporation, cloud formation, precipitation, and radiation by clouds. We tested every aspect of the simulation in detail, and based its operating parameters upon our own estimates and upon whatever measurements we could find in climate science journals. We did not choose our model parameters to suit any hypothesis of our own, nor could we have done so, because we did not have a model capable of testing the AGW hypothesis until the final stage, and we did not change the parameters in that final stage.

The latest version of our climate model shows that cloud cover increases rapidly as the surface warms above the freezing point of water. The evaporation rate of water from the surface increases approximately as the square of the temperature above freezing, and the only way for water to return to the surface is to form a cloud first. If we ignore the increased reflection of sunlight due to increasing cloud cover, and consider only the slowing-down of radiation into space by the same increase in cloud cover, our model shows roughly 3°C of warming due to a doubling in CO2 concentration. But when we take account of the increased reflection of sunlight by the increasing cloud cover, the warming drops to 0.9°C.

It seems to us that the climate models used by climatologists ignore the reflection of sunlight due to clouds. They may allow for some fixed fraction of sunlight to be reflected by clouds, but they do not allow this fraction to increase with increasing surface temperature. Thus they conclude that the warming due to CO2 doubling will be 3°C. If they took account of the increased reflection, the effect would be far smaller and less dramatic: roughly 1°C.

Doubling the CO2 concentration of the atmosphere will indeed encourage the world to warm up, but not by enough that we should worry. Right now CO2 concentration has increased from roughly 300 ppm to 400 ppm in the past century. If it gets to 600 ppm then we can say that the rise in CO2 concentration will tend to warm the Earth by 1°C. But we are unlikely to be able to check our calculations, because the natural variation in the Earth's climate is itself of order ±1°C from one century to the next.

And so we find ourselves at the end of our journey. Modern warming is not exceptional, and doubling the CO2 concentration will cause the world to warm up by roughly 1°C, not 3°C. The only part of the AGW theory we have not investigated is its assertion that human CO2 emissions are responsible for the increase in atmospheric CO2 concentration over the past century.

My thanks to those of you who took part in the effort, both by private e-mails and in the comments. I would not have continued the effort without your participation. I hope it is clear that my use of "we" instead of "I" is in recognition of the fact that this has been a group effort. I will continue to answer comments on this site, and I will consider any suggestions of further work. To the first approximation, however: we're done.

Thursday, March 15, 2012

Anthropogenic Global Warming

The Anthropogenic Global Warming (AGW) hypothesis states that doubling the CO2 concentration of the Earth's atmosphere will raise the average surface temperature of the Earth by a minimum of 1.5°C, and more likely 3°C.

In our investigation of the absorption and emission of long-wave radiation by the Earth's atmosphere, we calculated that a sudden doubling of CO2 concentration would decrease the power the Earth radiates into space by 6.6 W/m2. We then estimated how much the Earth and its atmosphere would have to warm up in order to restore the heat radiated into space to its original value. We assumed there would be no significant change in cloud cover as a result of the warming, and we applied Stefan's Law to calculate how the heat radiated by the Earth and its atmosphere would increase. We found that the required increase in surface temperature would be around 1.6°C. If there were no change in cloud cover, then the heat arriving from the Sun would remain the same, and we could expect the Earth to warm up by 1.6°C so as to once again arrive at thermal equilibrium.
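A figure of this size can be recovered from Stefan's Law with a short calculation. The sketch below is an assumption about how the numbers fit together, not a record of our original calculation: it takes the surface temperature as 288 K and the escaping power as 290 W/m2, the values quoted later in this post for the equilibrium point of our simulated planet, and it uses the small-change form of the fourth-power law. The function name is hypothetical.

```python
def warming_to_restore_balance(deficit_w_m2, surface_temp_k=288.0,
                               escaping_power_w_m2=290.0):
    """Warming (K) needed to make up a shortfall in radiated power.

    Stefan's Law gives P proportional to T^4, so for small changes
    dP / P = 4 * dT / T, and therefore dT = T * dP / (4 * P).
    The default temperature and power are assumptions (see lead-in).
    """
    return surface_temp_k * deficit_w_m2 / (4.0 * escaping_power_w_m2)

print(f"{warming_to_restore_balance(6.6):.2f} K")
```

With these assumed inputs the result is about 1.6 K. A different choice of escaping power would change the answer in inverse proportion.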

The AGW hypothesis states that in the event of the Earth warming, changes in cloud cover will be such as to amplify the warming we calculate using Stefan's Law. Here is an extract from today's entry on Global Warming at Wikipedia.

The main positive feedback in the climate system is the water vapor feedback. The main negative feedback is radiative cooling through the Stefan–Boltzmann law, which increases as the fourth power of temperature.

As the Earth warms up, water evaporates more quickly from the oceans. Almost all water that evaporates must turn into clouds before it returns to Earth. Water condensing directly onto grass in the morning is an exception to this rule, but the vast majority of water vapor will return only as rain or snow, and so must first take the form of a cloud.

Clouds absorb long-wave radiation emitted by the Earth's surface, so the Earth cannot radiate its heat directly into space. Instead, the clouds radiate into space and warm the Earth with back radiation. Thus increasing cloud cover means less heat radiated into space for the same surface temperature. This is the positive feedback referred to by the AGW hypothesis. The AGW climate models predict that this positive feedback will amplify the minimum 1.5°C warming caused by CO2 to roughly 3°C. Some say 2°C and others say 4°C, but all agree that the actual warming will be greater than 1.5°C.

We see this positive feedback in our Circulating Cells simulation, version CC11, which simulates the formation of clouds as well as their absorption and emission of long-wave radiation. The graph below shows a close-up of the behavior of the simulation in the neighborhood of its equilibrium point for 350 W/m2 solar power.

The blue line shows how the power that penetrates to the surface of our simulated planet varies with increasing surface temperature. The orange line shows how the total power escaping from our simulated planet increases with surface temperature. These two lines cross at a, where temperature is 288 K and total escaping power is 290 W/m2. Our simulated atmosphere absorbs 50% of long-wave radiation, which is an adequate approximation of our atmosphere with its current concentration of CO2 (roughly 330 ppm).

The green line is the same as the orange line, but displaced down by 6.6 W/m2, which is the amount by which we calculated the total power escaping from the Earth will decrease if we double CO2 concentration (to roughly 660 ppm). Thus the green line tells us the total escaping power at the same temperature if we were to double the CO2 concentration. Point b on the green line is 288 K, and the total escaping power is 283.4 W/m2.

The purple line shows how the total escaping power will increase from b if we assume the cloud cover is constant and use only Stefan's Law to determine the heat radiated into space by the surface and atmosphere. The red line shows the solar power penetrating to the surface if we assume the cloud cover is constant. With constant cloud cover, the penetrating solar power does not change.

The purple and red lines meet at c, which is 289.6 K, or 1.6°C above the previous equilibrium point. Thus our simulation shows us that the warming due to CO2 doubling, if we ignore changes in cloud cover, will be 1.6°C, which is consistent with our previous calculation.
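
The position of c can be checked by hand. The sketch below is not the CC11 code. It assumes only that, with constant cloud cover, the total escaping power scales as the fourth power of the surface temperature, starting from point b. The function name is ours, chosen for illustration.

```python
def stefan_equilibrium(t_start_k, p_start, p_target):
    """Temperature at which a power proportional to T**4 rises
    from p_start to p_target, starting at t_start_k (assumed scaling)."""
    return t_start_k * (p_target / p_start) ** 0.25

# Point b: 288 K and 283.4 W/m2. The penetrating solar power stays at 290 W/m2.
t_c = stefan_equilibrium(288.0, 283.4, 290.0)
warming = t_c - 288.0
print(f"c is near {t_c:.1f} K, a warming of {warming:.2f} K")
```

Under that assumption the warming comes out between 1.6°C and 1.7°C, in line with the value we read from the graph.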

The green line, however, is the simulation's calculation of the total escaping power for increasing surface temperature. We see that the heat radiated into space does not increase as quickly as Stefan's Law would lead us to expect. And the reason for that is precisely the reason quoted by the AGW hypothesis: increasing cloud cover is slowing down the radiation of heat into space. The green line and the red line intersect at d, which is 290.7 K, or 2.7°C above our original equilibrium temperature. This is the new equilibrium temperature of the planet surface if we double CO2 concentration and we assume that there will be no change in the solar power penetrating to the surface while the cloud cover increases.

But the solar power penetrating to the surface must decrease as cloud cover increases. Clouds reflect sunlight. Thick clouds reflect 90% of solar power back into space. Even thin, high clouds reflect 10%. Increasing cloud cover will decrease the solar power penetrating to the surface. That is why our blue line slopes downwards. This is negative feedback, which acts against the positive feedback described by the AGW theory. The blue line shows how the solar power penetrating to the surface decreases as our cloud cover increases.

The blue line and the green line intersect at e, which is the equilibrium point we arrive at after doubling the CO2 concentration and considering both the positive feedback of back-radiation and the negative feedback of solar reflection. The temperature at e is 288.9 K, which is 0.9°C above our original equilibrium temperature.
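
The four equilibrium points can be tied together with a little arithmetic. The sketch below assumes each line is straight in the neighborhood of 288 K, and it infers the slopes from the points quoted above rather than from the simulation output, so it illustrates the bookkeeping and is not an independent check. All names are ours.

```python
FORCING = 6.6  # W/m2 drop in escaping power when CO2 doubles

# Slopes in W/m2 per kelvin, inferred from the quoted points (assumed straight lines).
stefan_slope = FORCING / (289.6 - 288.0)  # purple line, b to c
green_slope = FORCING / (290.7 - 288.0)   # green line, b to d
# Blue line slope chosen so that blue and green cross 0.9 K above a.
blue_slope = green_slope - FORCING / (288.9 - 288.0)

def equilibrium_shift(forcing, escape_slope, solar_slope=0.0):
    """Warming needed for escaping power to match penetrating power again."""
    return forcing / (escape_slope - solar_slope)

print(equilibrium_shift(FORCING, stefan_slope))             # constant cloud cover
print(equilibrium_shift(FORCING, green_slope))              # back radiation only
print(equilibrium_shift(FORCING, green_slope, blue_slope))  # back radiation and reflection
```

On these assumptions the blue line falls by nearly 5 W/m2 per kelvin, roughly twice the rate at which the green line rises, which is why the reflection of sunlight wins.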

Thus our simulation shows how the negative feedback generated by clouds dominates their positive feedback, and suggests that the actual warming of the Earth's surface due to a doubling of CO2 will be closer to 0.9°C than the 3°C predicted by the AGW hypothesis.

Labels:

Climate Models,

Global Surface,

Greenhouse Effect,

Water Vapor

Thursday, February 23, 2012

Solar Increase Continued

Today we continue our previous post without any preamble. The graph below shows how our simulated atmosphere warms up as we increase the solar power from one hundred to twelve hundred Watts per square meter. The green line shows how we increased the solar power over the course of twelve simulated years. The blue line shows how the power penetrating to the surface varied with time. The red line is the average temperature of the air resting upon the surface of our simulated planet.

At first, when the sky is clear, the solar power and the penetrating power are equal. But when the solar power reaches 300 W/m2 clouds form and the penetrating power drops below the solar power. As solar power increases from 600 W/m2 to 1200 W/m2, fluctuations in the penetrating power double in their extent, but the average penetrating power appears to remain unchanged. The negative feedback generated by the evaporation cycle is so powerful that the surface air temperature increases by only a few degrees while we double the solar power.

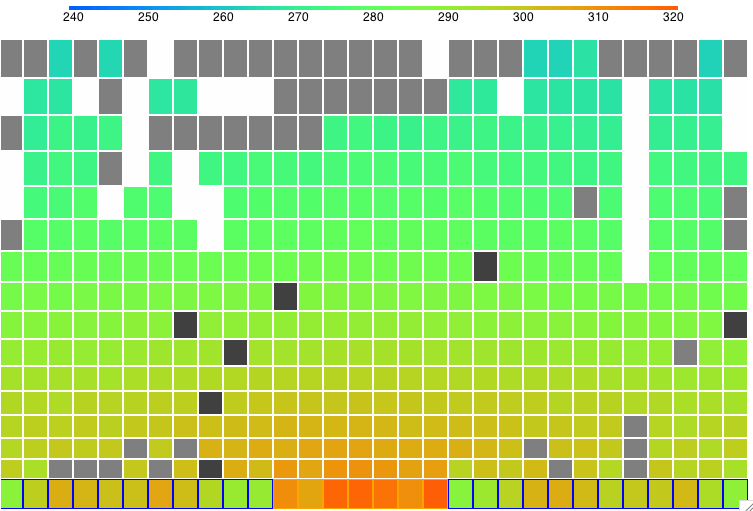

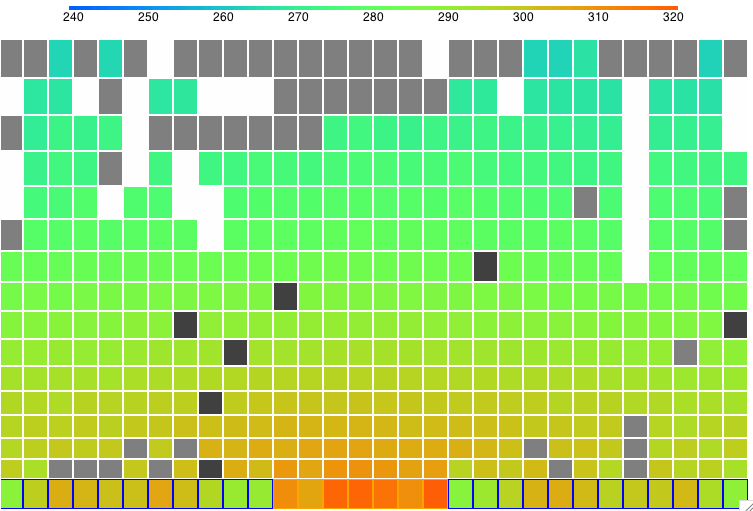

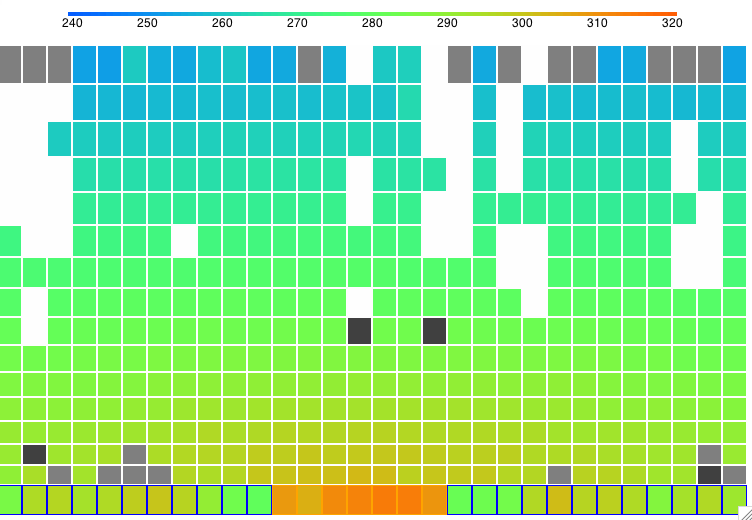

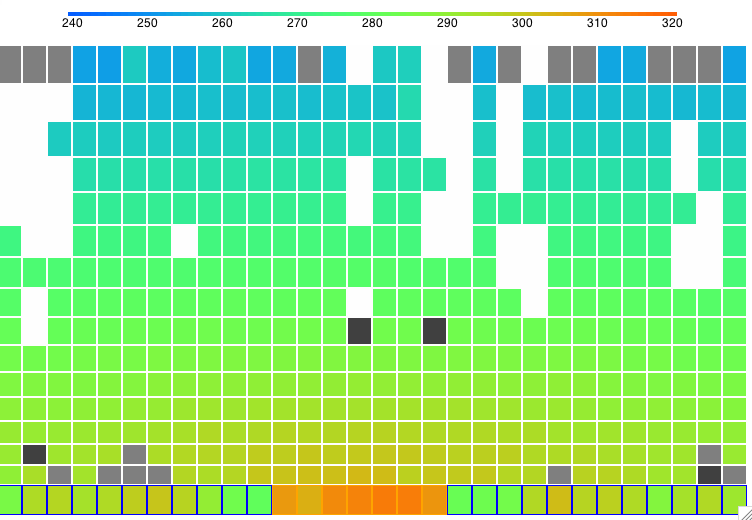

The following screen shot shows the state of the simulation after two thousand hours at 1210 W/m2. You can download this state as a text file SIC_1210W and load it into CC11 to watch the vigorous formation of clouds and descent of precipitation.

We now have the data we need to plot graphs of surface temperature and other properties of the simulation versus solar power.

Labels:

Climate Models,

Global Surface,

Greenhouse Effect,

Water Vapor

Monday, February 13, 2012

Solar Increase

In our previous post we cooled down our simulated atmosphere by reducing the incoming solar power to 100 W/m2. We gave the simulation plenty of time to reach equilibrium, and it arrived at the state RC_100W. The sky is clear. All the Sun's power penetrates to the surface. The surface temperature is −50°C.

We started CC11 from this equilibrium state. We increased the solar power to 110 W/m2. After two thousand hours of simulated time, we increased the solar power to 130 W/m2. We ran for another two thousand hours of simulated time, after which we increased the solar power again. The program performs the solar Increases automatically, so we were able to walk away from the computer and let the simulation run on its own.

The following graph shows surface temperature and solar power plotted against simulation time in weeks. The purpose of this plot is to determine if our simulation reaches equilibrium at each value of solar power.

Below 270 K, we see the surface temperature is still increasing two thousand hours after the increases in solar power. Perhaps ten thousand hours at each step would be enough. But we are faced with a practical problem of execution time: it took one full week for us to obtain the graph above, with a computer dedicated to the job running continuously.

Once the surface temperature gets above 270 K, however, the evaporation cycle starts up and we see the effects of negative feedback. The changes that result from increases in solar power are markedly smaller and they establish themselves more quickly. Thus our program of solar Increase appears to be gradual enough for temperatures above 270 K, and these are the temperatures we are most interested in.

The following figure shows the equilibrium state of the atmosphere with 490 W/m2 solar power. The running simulation shows vigorous cloud formation and precipitation. You can view this for yourself by downloading CC11, loading state SI_490 into the program, setting Q_sun to 490 in the configuration panel, and pressing Run.

By next week, we hope to have obtained the equilibrium state of our simulation for solar powers 100 W/m2 all the way up to 900 W/m2.

Labels:

Climate Models,

Global Surface,

Water Vapor

Monday, February 6, 2012

A Big Chill

In Negative Feedback, we increased our simulation's incoming solar power from 200 W/m2 to 400 W/m2 and saw the surface warm by only 5°C. It turned out that the increasing solar power was almost entirely compensated for by an increase in cloud thickness, so that the power penetrating to the surface remained almost constant. We now ask ourselves, what will happen if we drop the solar power all the way down to 100 W/m2? Will our Radiating Clouds be able to stop the world from cooling?

We started our Circulating Cells program (CC11) in its equilibrium state for 350 W/m2 solar power (RC_14000hr), and reduced the solar power to 100 W/m2. The following graph shows surface air temperature and atmospheric cloud depth for the first five hundred hours.

In the first ten hours, the surface temperature drops by a few degrees. This is what we might expect during one of Earth's nights. After three hundred hours, the surface temperature has dropped to −5°C and there are hardly any clouds left in the sky. The following graph shows the world cooling down to a new, clear-sky equilibrium of −50°C. The graph also shows the solar power penetrating to the surface. Once the sky clears, the penetration is exactly equal to 100 W/m2. We saved the final state of the atmosphere in RC_100W.

We conclude that 100 W/m2 is insufficient solar power to warm the Earth above the freezing point of water, and therefore insufficient solar power to generate the cycle of evaporation and precipitation that stabilizes our climate.
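
As a rough cross-check, independent of CC11, we can ask what temperature a bare black body would reach if it had to radiate the incoming power straight back into space. This is only Stefan's Law applied to a surface with no atmosphere, which is our simplifying assumption and not what the simulation calculates.

```python
SIGMA = 5.670374e-8  # Stefan-Boltzmann constant, W/m2/K^4

def black_body_temperature(power_w_per_m2):
    """Temperature in kelvin at which a black body radiates the given power."""
    return (power_w_per_m2 / SIGMA) ** 0.25

for q_sun in (100.0, 350.0):
    t_kelvin = black_body_temperature(q_sun)
    print(f"{q_sun:.0f} W/m2: {t_kelvin:.0f} K, {t_kelvin - 273.15:.0f} C")
```

For 100 W/m2 the bare black body sits near 205 K, well below freezing. Our simulated surface is somewhat warmer than that at −50°C, which is what we would expect if the simulated atmosphere absorbs part of the long-wave radiation leaving the surface.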

Monday, November 28, 2011

Less Reflection

With 350 W/m2 arriving from the Sun, 75% of the surface covered by water, clouds sinking at 300 mm/s, and each 3 mm of cloud reflecting 63% of sunlight, our CC9 simulation converges upon a surface air temperature of −12°C. When we increase the Sun's power to 400 W/m2, the temperature rises by a mere 0.5°C. Our simulated planet is kept cold by thick clouds that reflect the Sun's light back into space. Ice crystals drift down from the sky in some places, while elsewhere water evaporates from the frozen seas.

The surface of the Earth is at an average temperature well above the freezing point of water, and the Earth's sky is frequently clear of clouds. Our simulated sky never clears, and the surface is frozen. It never rains in our simulation, nor do our simulated clouds emit or absorb radiation. Perhaps these two omissions are responsible for our permanent clouds and frozen seas. Before we attempt to rectify them, however, let us consider the effect of decreasing the reflecting power of our simulated clouds.

We increased Lc_water from 3.0 mm to 6.0 mm, so that it now takes 6.0 mm of cloud water to reflect 63% of the Sun's light. With the reflecting power divided in half, we ran our simulation for eight thousand hours from the starting point CS_0hr. You will find the final state in LR_8000hr.
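
The figure of 63% is what an exponential attenuation law gives at one characteristic length, because 1 − 1/e is roughly 0.632. The sketch below assumes, and it is only an assumption made for illustration, that Lc_water plays that role, so that the reflected fraction is 1 − exp(−depth/Lc_water). The function name is ours and is not taken from the CC11 source.

```python
import math

def reflected_fraction(cloud_depth_mm, lc_water_mm):
    """Fraction of sunlight reflected by a cloud of the given water depth,
    assuming exponential attenuation with characteristic length lc_water_mm."""
    return 1.0 - math.exp(-cloud_depth_mm / lc_water_mm)

for lc_water in (3.0, 6.0):
    fraction = reflected_fraction(3.0, lc_water)
    print(f"Lc_water = {lc_water} mm: 3 mm of cloud reflects {fraction:.0%}")
```

On this reading, the same 3 mm of cloud that used to reflect 63% of sunlight now reflects about 39%.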

Compared to before, we now have more clouds in the sky. The following graph shows how cloud depth and surface air temperature vary with time.

Compared to before, we see the atmosphere reaches equilibrium in one third the time. The new temperature is higher and the cloud cover is thicker. The following table compares the state of the atmosphere for both types of clouds.

Our seas are now at −3°C. If they contain salt, they will not freeze. The air a few meters above our sandy island will be just below freezing. Our simulated world is still much colder than the Earth, and nobody standing on the island would ever see the Sun. We are, however, gratified to find that our simulation remains stable with such a large drop in cloud reflectance.

Labels:

Climate Models,

Global Surface,

Greenhouse Effect,

Water Vapor

Tuesday, November 22, 2011

Negative Feedback

With fast-sinking clouds, our circulating cells program reaches equilibrium in eight hundred hours of simulation time. With 350 W/m2 arriving continuously from the Sun, the surface air temperature settles to 261 K.

If we increase the power arriving from the sun, it seems reasonable to suppose that the surface temperature of our planet will rise. Indeed, before we added clouds to our simulation, we could use Stefan's Law to answer this question. The planet surface absorbed all the Sun's heat and the surface and tropopause radiated it all back into space, so if we increased Solar power by 4%, the absolute temperature of the surface and the tropopause would increase by 1%. But with clouds reflecting light from the Sun, we can no longer assume that all the Sun's heat will be absorbed, nor even that a constant fraction of it will be absorbed.

We ran our fast-sinking clouds simulation repeatedly from the same CS_0hr starting conditions, each time with a different Solar power. Each time we stopped the simulation after a thousand simulated hours, so that we could be sure it had reached equilibrium, and recorded the surface air temperature. We obtained the following graph.

Without clouds, a doubling of Solar power would cause the surface temperature to increase by roughly 20%. Here we increase Solar power from 200 W/m2 to 400 W/m2 and the surface temperature increases by only 1.5%. The following graph shows how the cloud depth increases with Solar power, thus decreasing the fraction of Solar power that penetrates to the surface.

The Sun's light, arriving at the surface, causes evaporation. This evaporation leads to clouds. But these same clouds reflect the Sun's light back into space. Thus one effect of Sunlight arriving at the surface is to reduce the amount of Sunlight arriving at the surface. The effect of clouds is an example of negative feedback. This negative feedback reduces the sensitivity of surface temperature to Solar power by more than a factor of ten.
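
The percentages quoted above follow from Stefan's Law, in which radiated power is proportional to the fourth power of absolute temperature. The short sketch below reproduces them. It assumes a cloud-free planet that radiates all absorbed Solar power, and it takes the 1.5% figure for the cloudy simulation directly from our graph.

```python
def stefan_temperature_ratio(power_ratio):
    """Ratio of absolute temperatures when radiated power changes by power_ratio."""
    return power_ratio ** 0.25

rise_4_percent = (stefan_temperature_ratio(1.04) - 1.0) * 100.0
rise_doubling = (stefan_temperature_ratio(2.0) - 1.0) * 100.0
simulated_rise = 1.5  # percent, 200 W/m2 to 400 W/m2 with clouds, from our graph

print(f"4% more power: {rise_4_percent:.2f}% warmer")
print(f"Doubled power: {rise_doubling:.1f}% warmer")
print(f"Sensitivity reduced by a factor of {rise_doubling / simulated_rise:.1f}")
```

Without clouds a doubling gives a rise of about 19%, so the clouds reduce the sensitivity by a factor of roughly twelve.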

Tuesday, April 12, 2011

The BEST Project

The Berkeley Earth Surface Temperature (BEST) project has set out to re-calculate the global surface temperature trend. They describe the basis of their calculation here.

The first thing BEST addresses when they describe their calculation is the number of available weather stations, and how many of them they should use to calculate a trend. We considered the same problem at length here and in brief here. According to BEST, there are around fifteen thousand weather stations reporting daily from 1970 to 2010. The Climatic Research Unit (CRU), the National Climatic Data Center (NCDC), and we ourselves based our calculations upon the GHCN station data. According to BEST, the GHCN data includes fewer than one in ten of the stations that recorded temperatures in 2000. The BEST team hope to use a greater fraction of the available stations.

BEST provided testimony to the US Congress on 31st March. They have already applied their basic calculation to 2% of the fifteen thousand stations available in the period 1970 to 2010. They made no effort to correct for systematic errors like urban heating. And yet they arrive at a global surface temperature trend almost identical to that of CRU and NCDC. Here's what they have to say about this agreement.

The Berkeley Earth agreement with the prior analysis surprised us, since our preliminary results don’t yet address many of the known biases. When they do, it is possible that the corrections could bring our current agreement into disagreement. Why such close agreement between our uncorrected data and their adjusted data? One possibility is that the systematic corrections applied by the other groups are small. We don’t yet know.

We were just as surprised when we reproduced the CRU trend from the GHCN data by integrated derivatives. We used no reference grid. We used no corrections for systematic errors.
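
One way to read "integrated derivatives" is as follows: for each pair of consecutive years, average the year-to-year change over every station that reports in both years, then add up those average changes. The sketch below is a minimal illustration of that reading and is not the code we ran on the GHCN data. The station records are invented, and each one is a hypothetical dictionary mapping year to annual mean temperature.

```python
def integrated_derivatives(stations, years):
    """Cumulative sum of the average year-to-year temperature change.

    stations: list of dicts mapping year -> annual mean temperature (hypothetical layout).
    years: consecutive years to cover. Returns one trend value per year,
    starting from zero in the first year.
    """
    trend = [0.0]
    for previous, current in zip(years, years[1:]):
        changes = [s[current] - s[previous]
                   for s in stations if previous in s and current in s]
        # With no station reporting in both years, assume no change.
        step = sum(changes) / len(changes) if changes else 0.0
        trend.append(trend[-1] + step)
    return trend

# Invented example: the second station starts reporting a year late.
example_stations = [
    {2000: 10.0, 2001: 10.5, 2002: 11.0},
    {2001: 20.0, 2002: 20.1},
]
example_trend = integrated_derivatives(example_stations, [2000, 2001, 2002])
print(example_trend)
```

Because only differences are averaged, a station that is much warmer or colder than its neighbors does not shift the trend when it enters or leaves the record, which is why no reference grid is required.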

NASA estimates the urban heating effect in US weather stations to be roughly half a degree centigrade during the twentieth century (see our post here and NASA's paper here). But CRU claims that urban heating is either negligible or has been accounted for in their efforts. And NASA applies 0.5°C corrections and goes on to say that the corrected trend still shows a rise of 0.5°C in the twentieth century, and they claim this trend is accurate to ±0.1°C. We find all this confusing, and so do the people at BEST, which is why they are re-calculating the trend.

The GHCN inclusion criteria are another potential source of systematic bias in the trend. If we select stations from within the GHCN data set according to various criteria that appear to have nothing to do with temperature, we find that the trend alters in a significant way. The graph below shows the trend we obtain if we select from the GHCN data set only those stations that are reporting for at least 80% of the years between 1960 and 2000. We get a trend in which the 1930s are as warm as the 1990s.
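
A selection rule of this kind takes only a few lines to express. The sketch below assumes each station is represented by the set of years in which it reports, and that the period 1960 to 2000 includes both end years. The station names and records are invented for illustration and do not come from the GHCN files.

```python
def coverage(years_reported, start, end):
    """Fraction of the years start..end (inclusive) in which the station reports."""
    span = range(start, end + 1)
    return sum(1 for year in span if year in years_reported) / len(span)

def select_stations(stations, start=1960, end=2000, min_fraction=0.8):
    """Names of stations reporting in at least min_fraction of the period."""
    return [name for name, years in stations.items()
            if coverage(years, start, end) >= min_fraction]

# Invented records: years in which each hypothetical station reports.
example = {
    "A": set(range(1950, 2001)),  # reports throughout the period
    "B": set(range(1990, 2001)),  # reports only in the final decade
    "C": set(range(1960, 1993)),  # reports for 33 of the 41 years
}
selected = select_stations(example)
print(selected)
```

The rule makes no reference to temperature, yet it decides which stations contribute to the trend.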

We await with interest the BEST project's investigation of the effect of urban heating and station inclusion. We look forward to examining their trend-calculation algorithm. We have argued before that all such methods, with or without a reference grid, are pretty much equivalent, so we expect them to come up with something similar to the CRU and NCDC trends before they start to correct for systematic errors.

How BEST will correct for systematic errors in any meaningful or accurate way, we cannot say. If it were up to us, we would select long-lived stations in rural locations, and use the trends from those, even if there were only a dozen of them. But we have tried that already, and we get plots like the one above. The 1930s were hot, and it's just as hot now. But not especially hot.

The first thing BEST addresses when they describe their calculation is the number of available weather stations, and how many of they should use to calculate a trend. We considered the same problem at length here and in brief here. According to BEST, there are around fifteen thousand weather stations reporting daily from 1970 to 2010. The Climatic Research Unit (CRU), the National Climatic Data Center (NCDC), and we ourselves based our calculations upon the GHCN station data. According to BEST, the GHCN data includes fewer than one in ten of the stations that recorded temperatures in 2000. The BEST team hope to use a greater fraction of the available stations.

BEST provided testimony to the US Congress on 31st March. They have already applied their basic calculation to 2% of the fifteen thousand stations available in the period 1970 to 2010. They made no effort to correct for systematic errors like urban heating. And yet they arrive at a global surface temperature trend almost identical to that of CRU and NCDC. Here's what they have to say about this agreement.

The Berkeley Earth agreement with the prior analysis surprised us, since our preliminary results don’t yet address many of the known biases. When they do, it is possible that the corrections could bring our current agreement into disagreement. Why such close agreement between our uncorrected data and their adjusted data? One possibility is that the systematic corrections applied by the other groups are small. We don’t yet know.

We were just as surprised when we reproduced the CRU trend from the GHCN data by integrated derivatives. We used no reference grid. We used no corrections for systematic errors.

Tuesday, January 11, 2011

Mercury Bulb, Part II

Suppose we expose our mercury bulb thermometer to radiation from the Sun and the ground, as shown below. We have short-wave radiation arriving from the Sun far away, long-wave radiation arriving from the ground just below, and the bulb radiating as well. If we assume the air below the bulb is transparent, the heat the bulb receives from the ground is equal to the heat it would receive from a hemispherical shell of the same material.

The ground and the bulb are opaque to short-wave and long-wave radiation. The emissivity of mercury is roughly 0.10 for short-wave and long-wave, while that of sand and dirt is 0.90 for both short-wave and long-wave.

In the following calculations, we use the letter ε for emissivity, and a subscript to indicate ground or bulb. The radius of the bulb is r. We calculate the net absorption of radiation by the bulb, in Watts. We assume that this extra heat will cause a slight increase in the bulb's temperature over the temperature of the air, leading to conduction of the excess heat away from the bulb. We apply the equation for steady-state conduction we derived last time, and so arrive at an equation for the change in the bulb temperature in terms of power arriving from the sun, ground temperature, and air temperature.

In Arizona over Thanksgiving, we observed the ground to be at around 320 K at mid-day and the air at 300 K. In Solar Heat, we showed that the power arriving from the sun is 1,400 W for each square meter we hold up perpendicular to its rays. A thermometer exposed to the sun and the ground under these conditions will read 1.5°C warmer than the air. At night, we observed the air and the ground to drop to around 280 K. A thermometer free to radiate its heat into space under these conditions will read 1.5°C colder than the air.
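The arithmetic behind these figures can be checked with a short Python sketch. It is a reconstruction under assumptions of our own choosing, not a copy of the equations in the figures: we assume the bulb absorbs sunlight over its cross-section, sees the ground over its lower hemisphere, receives nothing from the sky, radiates at roughly the air temperature, and sheds the excess heat by steady-state conduction through still air, P = 4πkr ΔT. The bulb radius of 2 mm and the air conductivity of 0.024 W/m/K are assumed values.

```python
import math

SIGMA = 5.670e-8  # Stefan-Boltzmann constant, W/m^2/K^4
K_AIR = 0.024     # thermal conductivity of still air, W/m/K (assumed value)


def bulb_offset(r, t_air, t_ground, solar, eps_bulb=0.10, eps_ground=0.90):
    """Steady-state bulb temperature minus air temperature, in kelvin.

    r is the bulb radius in meters, temperatures are in kelvin, and solar is
    the direct solar power in W/m^2. Sky radiation is ignored (assumption).
    """
    # Sunlight is intercepted by the bulb's cross-section, pi r^2.
    absorbed_sun = eps_bulb * solar * math.pi * r**2
    # The lower hemisphere of the bulb, 2 pi r^2, faces the ground.
    absorbed_ground = (eps_bulb * eps_ground * SIGMA * t_ground**4
                       * 2 * math.pi * r**2)
    # The whole surface radiates; the bulb is taken to be near air temperature.
    emitted = eps_bulb * SIGMA * t_air**4 * 4 * math.pi * r**2
    net_power = absorbed_sun + absorbed_ground - emitted
    # Steady-state conduction from a sphere into still air: P = 4 pi k r dT.
    return net_power / (4 * math.pi * K_AIR * r)


day = bulb_offset(0.002, t_air=300, t_ground=320, solar=1400)
night = bulb_offset(0.002, t_air=280, t_ground=280, solar=0)
print(f"day: {day:+.1f} K, night: {night:+.1f} K")
```

With these assumptions the offsets come out between one and two degrees, positive by day and negative by night, which is the same size as the 1.5°C quoted above. The result scales in proportion to the bulb radius, so a somewhat larger bulb gives a somewhat larger error.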

Suppose we house our thermometer in a box with holes on the sides to let air through, but a solid roof and floor. We make the box out of a good insulator, like wood. Air can move freely through the box, but radiation from the ground and the sun is blocked. The outside of the box may get hot during the day or cold at night, but the inside surface will settle to the air temperature, along with the thermometer bulb. The radiation the bulb receives from the box will be exactly equal to the radiation it emits towards the box.

During the day, the ground warms the air. In order for heat to pass from the ground to the air, there must be a sharp temperature gradient near the surface, because the conductivity of air is so poor. If we place our thermometer one meter above the surface, it will be out of this heating layer, and so measure the temperature of the main body of air moving along the ground. When the wind blows, the thermometer will be more effective at measuring air temperature, because the movement of air around the bulb greatly facilitates the transfer of heat to and from the bulb. But once again, the wind near the ground can be erratic, or shielded by obstacles, so placing the thermometer a meter above the ground would make sure that it experiences the temperature of the main body of moving air.

We conclude that a thermometer mounted in a perforated box one meter above the ground will give us an accurate measurement of air temperature, despite the effects of radiation, conduction, and convection.

Monday, January 3, 2011

Mercury Bulb, Part I

When the temperature recorded by a thermometer two meters above the desert drops by 10°C in an hour, what does this drop mean? Does it mean that the air has cooled down, or does it mean that the radiating bodies around it have cooled down? When we feel cold walking in the desert at night, is this because the air is cold, or is it because the sand all around us is no longer radiating heat that would otherwise warm our bodies? So far, we have assumed that it is the air that cooled down. In any case, we feel it is time to consider the role played by conduction, radiation, and convection in determining the temperature recorded by a mercury bulb thermometer.

Let us start with conduction. We will simplify the conduction question by considering steady-state heat flow out of a spherical mercury bulb with thin walls. By "steady-state" we mean that no element in our problem is either warming up or cooling down. The heat conducted away from the bulb is constant. The advantage of this simplification is that it has a simple analytical solution, as we show in the following diagram.

Meanwhile, the heat capacity of the bulb allows us to relate the heat flow out of the bulb to the rate at which the temperature of the bulb must be falling, as we show below. In our continuing equations, we use T and r for the temperature and radius of the bulb.

The following graph plots the temperature of mercury bulbs of various radii versus time after a sudden 10°C drop in temperature. We see that a bulb of radius 2 mm will cool to within 0.5°C of the new air temperature within a few minutes.
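The cooling curve can be approximated with a short Python sketch. It is a minimal model under assumptions of our own, not the calculation behind the graph: we treat the bulb as a solid mercury sphere at uniform temperature, losing heat only by conduction through still air at the steady-state rate 4πkr(T − T_air), which gives an exponential decay with time constant r²ρc/(3k). The property values for mercury and air are approximate textbook figures.

```python
import math

K_AIR = 0.024     # thermal conductivity of still air, W/m/K (assumed value)
RHO_HG = 13534.0  # density of mercury, kg/m^3 (approximate)
C_HG = 140.0      # specific heat of mercury, J/kg/K (approximate)


def time_constant(r):
    """Cooling time constant in seconds for a mercury sphere of radius r (m)."""
    heat_capacity = (4.0 / 3.0) * math.pi * r**3 * RHO_HG * C_HG  # J/K
    conductance = 4.0 * math.pi * K_AIR * r                       # W/K
    return heat_capacity / conductance


def bulb_excess(r, t, step=10.0):
    """Bulb temperature above the new air temperature t seconds after the drop."""
    return step * math.exp(-t / time_constant(r))


def settle_time(r, step=10.0, tolerance=0.5):
    """Seconds until the bulb is within tolerance of the new air temperature."""
    return time_constant(r) * math.log(step / tolerance)


for radius_mm in (1, 2, 4):
    r = radius_mm / 1000.0
    print(f"r = {radius_mm} mm: tau = {time_constant(r):.0f} s, "
          f"within 0.5 C after {settle_time(r) / 60:.1f} min")
```

Under these assumptions a 2-mm bulb has a time constant of about a hundred seconds and settles to within 0.5°C in roughly five minutes. The time constant grows with the square of the radius, so doubling the radius quadruples the settling time.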

Most mercury bulbs are a few millimeters in diameter, so it appears that mercury thermometers can measure air temperature accurately provided that the changes in air temperature occur on a time scale of ten minutes or longer. In our next post we will consider whether radiation from the ground, the sun, and the bulb itself will disturb the thermometer's measurement of ambient air temperature.

Monday, August 23, 2010

First Differences

Over at Climate Audit, Hu McCulloch has a guest post, The First Differences Method, that describes a simple way to calculate the global surface trend from station data. This method turns out to be the same as the Integrated Derivative Method we describe in our Climate Analysis essay. I said as much in the comments of Hu's post, and pointed to our Continuous Stations plot, which we generated with our Integrated Derivative Method.
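For readers who have not seen either method, here is a minimal Python sketch of the idea as we understand it: for each pair of consecutive years, average the year-to-year change over every station that reports in both years, then accumulate those average changes into a trend. The data layout, the function name, and the choice to treat a year with no overlapping stations as zero change are our own assumptions for illustration, not details taken from Hu's post or from our analysis code.

```python
def first_difference_trend(stations, first_year, last_year):
    """Accumulate the station-averaged year-to-year changes into a trend.

    stations: dict mapping station id -> dict mapping year -> annual mean
    temperature (hypothetical layout). The trend starts at zero in first_year.
    """
    trend = [0.0]
    for year in range(first_year + 1, last_year + 1):
        # Use only stations that report in both this year and the previous one.
        diffs = [annual[year] - annual[year - 1]
                 for annual in stations.values()
                 if year in annual and (year - 1) in annual]
        # Assumption: with no overlapping stations, carry the trend forward.
        step = sum(diffs) / len(diffs) if diffs else 0.0
        trend.append(trend[-1] + step)
    return trend


# Toy data, invented for illustration only.
stations = {
    "A": {2000: 10.0, 2001: 11.0, 2002: 12.0},
    "B": {2001: 20.0, 2002: 20.5},
}
print(first_difference_trend(stations, 2000, 2002))
```

Station "B" is ten degrees warmer than "A" in absolute terms, but its arrival in 2001 does not produce a jump in the trend, because only changes within each station enter the average. That is why the method needs no reference grid.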

Wednesday, July 28, 2010

Hockey Stick Defenders

I had a look through this defense of the Hockey Stick over at Realclimate. The author is Tamino. Here he writes at length defending Michael Mann's hockey stick graph against Steve McIntyre, Andrew Montford, and others. He insults their integrity and character. I'm not surprised. Tamino called me an idiot and blocked me from commenting on his website when I asked, "Has there been any statistically significant warming in the past twenty years?"

Steve McIntyre has his own answer to Tamino's post. He concentrates upon how Tamino mis-quotes him.

But here's my answer to Tamino. The central argument against the Hockey Stick, as put forward by Steve McIntyre and Ross McKitrick, and confirmed by the Wegman Report for the US Congress, is that Michael Mann's data analysis generates the hockey stick from random proxy data. We explain how his analysis produces the hockey stick here, and I first posted about the effect here.